Greetings everyone!! Yes, it’s been a while…sorry about that. Been busy with life…I’ll just leave it at that.

So at work, for the last couple of days, the Citrix admin has been having an issue with users at one of our larger remote sites…seems they are intermittently unable to connect to a Citrix server after being redirected by the Citrix license server. He brought me into the problem this morning once he realized the problem was only occurring at this one site. Very interesting!

First step was to capture some of the traffic and see what’s going on. I have a Linux server at the Data Center running Snort, watching almost all of the traffic into and out of the Data Center, so it comes in very handy when I need to capture some traffic. I started tcpdump on the server, specifying the interface that carries the traffic from the remote site, filtering on the address of the PC we were testing from, and writing the packets to a file…the command was…

tcpdump -vv -nn -s 0 -i eth3 -w /root/pcapfiles/citrix_issue.pcap host 10.10.21.223

The Citrix admin was remoted into a PC at the site, and he attempted to connect to Citrix. After I captured the data, I moved the file to my laptop and opened it up with Wireshark…I saw the 3-way handshake (SYN, SYN/ACK, ACK), and then some data going back and forth, but the session never started up and it timed out. Weird.

I also captured a Wireshark session from my own laptop so I could see how it was supposed to work. I still could not see what the issue was…very strange.

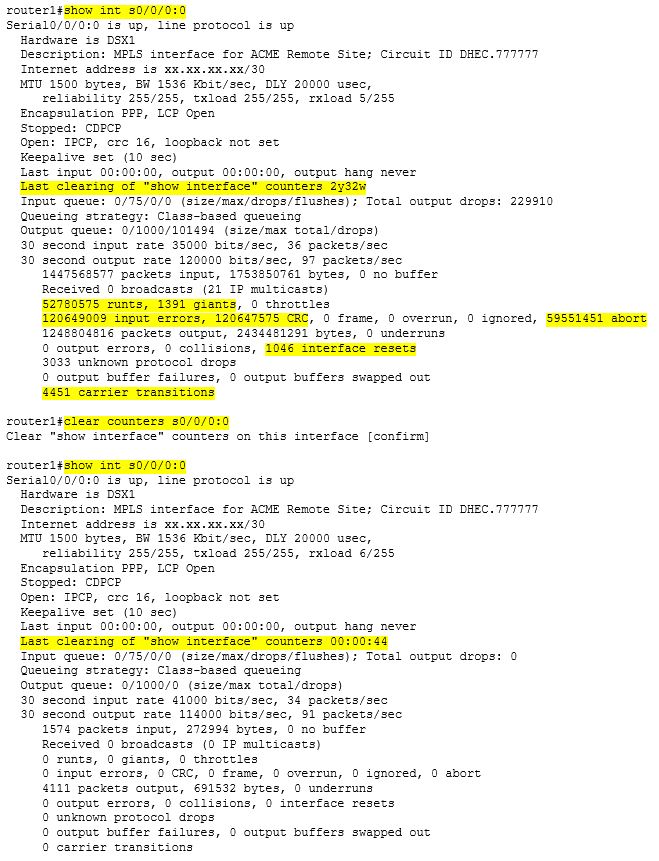

I then decided that I needed to see packet captures from both sides of the conversation. So I remoted into the PC and installed Wireshark on it…I then started up Wireshark on the PC and tcpdump on my Linux server, and then tried the Citrix client again. After it timed out, I opened up both PCAP files in Wireshark and examined them side by side, packet by packet. On the PC, I saw this…(PC = 10.10.21.223, and Citrix server = 10.12.1.122)…

I found the matching packet on the capture from the Data Center…

Say what????? I see a completed 3-way handshake…including the SYN/ACK from the server back to the PC (which was not in the PC capture), and another packet from the PC to the server completing the handshake…also not in the PC capture. VERY bizarre!!!
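For anyone who would rather not eyeball two captures packet by packet, here is a minimal Python sketch of the same comparison. It assumes you have already pulled the TCP packets out of each PCAP into simple records (that extraction step is not shown). The `TcpPacket` record, the `missing_from` helper, the port numbers, and the sequence numbers are all made up for illustration; only the two IP addresses come from the captures above, and 1494 is simply the usual Citrix ICA port, not something confirmed from my trace.

```python
from typing import NamedTuple


class TcpPacket(NamedTuple):
    """One TCP packet as seen in a capture (hypothetical record layout)."""
    src: str
    dst: str
    sport: int
    dport: int
    flags: str  # "S" = SYN, "SA" = SYN/ACK, "A" = ACK
    seq: int    # raw sequence number, used to match packets across captures


def missing_from(reference, other):
    """Return packets present in `reference` but absent from `other`, in order."""
    seen = set(other)
    return [pkt for pkt in reference if pkt not in seen]


PC = "10.10.21.223"
SERVER = "10.12.1.122"

# Illustrative data only: the PC capture shows its SYN and nothing else.
pc_capture = [
    TcpPacket(PC, SERVER, 51000, 1494, "S", 1000),
]

# Illustrative data only: the Data Center capture shows a full handshake.
dc_capture = [
    TcpPacket(PC, SERVER, 51000, 1494, "S", 1000),
    TcpPacket(SERVER, PC, 1494, 51000, "SA", 5000),
    TcpPacket(PC, SERVER, 51000, 1494, "A", 1001),
]

# Packets the Data Center saw that the PC never sent or received.
for pkt in missing_from(dc_capture, pc_capture):
    print(pkt.flags, pkt.src, "->", pkt.dst)
```

With this sample data, the loop lists the SYN/ACK and the final ACK. Those are exactly the two packets that showed up at the Data Center but never appeared on the PC. In a real trace you may need to match on something other than the raw sequence number, since a device in the middle can rewrite it.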

Then it hit me…Riverbed!! The only way a handshake could be completed like this is if another device sitting in between the PC and the server was answering on their behalf…and we do have that. We have Riverbed devices sitting at the Data Center and at most large remote sites handling WAN optimization. Since no other sites were reporting any issues, the Riverbed at this remote site must be “kinked”. So we restarted the Citrix application optimization process on the Riverbed, and that fixed everything!

VERY cool…and very interesting. This took some time to figure out, but once I got visibility at both ends of the conversation, the answer was easy. Remember…Wireshark is your friend.